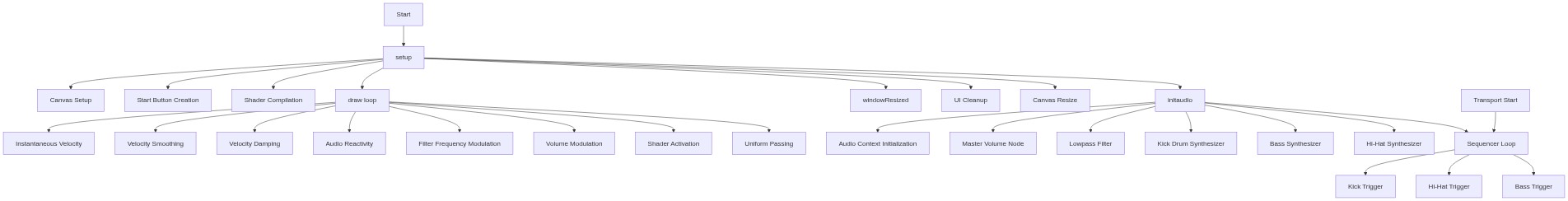

setup()

setup() runs once when the sketch starts. In this sketch, it creates the WebGL canvas, builds the UI elements, and compiles the shaders that will render the chrome sphere. Browsers block audio playback until the user interacts with the page, so audio is started from the button's press handler rather than from setup() itself.
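The sketch's actual initAudio() is not shown in this section. As a rough illustration of the user-gesture pattern, a handler along these lines could be used. It assumes the p5.sound library is loaded (which provides userStartAudio()), and the audioStarted flag and the call to startBtn.hide() are hypothetical additions for this example only.

```javascript
// Hypothetical sketch of a button handler; the real initAudio() may differ.
let audioStarted = false; // assumption: guards against starting audio twice

function initAudio() {
  if (audioStarted) return; // ignore repeated presses
  audioStarted = true;

  // userStartAudio() comes from p5.sound (assumed to be loaded). It resumes
  // the AudioContext, which browsers keep suspended until a user gesture.
  userStartAudio();

  // Assumption: hide the start button once audio is running.
  // startBtn is the p5.Element created in setup().
  startBtn.hide();
}
```

Because the handler only runs in response to the button press, the audio context is resumed inside a genuine user gesture, which is what the browser policy requires.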

function setup() {

createCanvas(windowWidth, windowHeight, WEBGL);

// Create UI overlay to trigger audio context

startBtn = createButton('ENTER EXPERIENCE');

startBtn.id('start-btn');

startBtn.mousePressed(initAudio);

// Create instructions text (hidden initially)

instructions = createDiv('MOVE MOUSE / TOUCH TO SHAPE THE METAL & MODULATE THE BEAT');

instructions.id('instructions');

// Create creator credit text

creditText = createDiv('MADE BY CORBUN');

creditText.id('credit');

liquidShader = createShader(vertShader, fragShader);

noStroke();

}

🔧 Subcomponents:

createCanvas(windowWidth, windowHeight, WEBGL)

Creates a WebGL canvas sized to the full browser window

startBtn = createButton('ENTER EXPERIENCE')

Creates an interactive button to trigger audio initialization

liquidShader = createShader(vertShader, fragShader)

Compiles the vertex and fragment shaders into a WebGL shader program
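The vertShader and fragShader passed to createShader() are GLSL source strings defined elsewhere in the sketch. To show the shape of what createShader() expects, here is a minimal pair of placeholder shaders. Their contents are an assumption for illustration and are far simpler than the chrome effect; only the attribute and uniform names (aPosition, aTexCoord, uModelViewMatrix, uProjectionMatrix) are the ones p5.js supplies to shaders in WEBGL mode.

```javascript
// Hypothetical minimal shader sources; the sketch's real shaders are
// defined elsewhere and are more elaborate.
const vertShader = `
  attribute vec3 aPosition;        // vertex position supplied by p5.js
  attribute vec2 aTexCoord;        // texture coordinate supplied by p5.js
  uniform mat4 uModelViewMatrix;   // set by p5.js
  uniform mat4 uProjectionMatrix;  // set by p5.js
  varying vec2 vTexCoord;

  void main() {
    vTexCoord = aTexCoord;
    gl_Position = uProjectionMatrix * uModelViewMatrix * vec4(aPosition, 1.0);
  }
`;

const fragShader = `
  precision mediump float;
  varying vec2 vTexCoord;

  void main() {
    // Color each fragment by its texture coordinate (placeholder output).
    gl_FragColor = vec4(vTexCoord, 0.5, 1.0);
  }
`;
```

Both values are plain strings, so they can be declared at the top of the sketch and handed to createShader() inside setup() once the WEBGL canvas exists.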

Line by Line:

createCanvas(windowWidth, windowHeight, WEBGL) - Creates a canvas sized to the full browser window. The WEBGL mode enables 3D graphics and shader support.

startBtn = createButton('ENTER EXPERIENCE') - Creates a clickable button that triggers audio initialization when pressed.

startBtn.id('start-btn') - Assigns a CSS ID to the button so it can be styled with the rules in style.css.

startBtn.mousePressed(initAudio) - Registers initAudio() as the handler called when the button is pressed. initAudio() starts the audio context and music.

instructions = createDiv('MOVE MOUSE / TOUCH TO SHAPE THE METAL & MODULATE THE BEAT') - Creates a div holding the usage instructions, which is hidden initially.

creditText = createDiv('MADE BY CORBUN') - Creates a div that credits the original creator.

liquidShader = createShader(vertShader, fragShader) - Builds a WebGL shader program from the vertex and fragment shader source strings and stores it in liquidShader.

noStroke() - Disables stroke outlines for shapes drawn from this point on.
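One detail worth noting about createCanvas(windowWidth, windowHeight, WEBGL): the canvas is sized only once, when setup() runs. If the window is resized later, the canvas keeps its original dimensions unless the sketch handles it. Whether the original sketch does so is not shown here. A minimal sketch using p5.js's standard windowResized() callback might look like this:

```javascript
// p5.js calls windowResized() automatically whenever the browser window
// changes size. This handler is an illustrative addition, not taken from
// the original sketch.
function windowResized() {
  // Resize the existing canvas to match the new window dimensions.
  resizeCanvas(windowWidth, windowHeight);
}
```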